Ion Burcea

(Tetlock, Philip E., and Dan Gardner. Superforecasting: The Art and Science of Prediction. First edition. New York: Crown Publishers, 2015).

We are all forecasters…

We are all forecasters, and we base our daily routines on all kinds of small-scale predictions. We predict that there will be a traffic jam at rush hour, so we plan accordingly and avoid driving at that time; we expect tomorrow's rainy forecast to be accurate, so we postpone the planned trip. Such predictions are usually successful, since traffic jams are easy to predict and weather forecasts are trustworthy. But when it comes to more complex events, such as political or financial ones, we might not be as successful. On such matters, common sense seems to dictate that forecasting is best left to well-informed experts.

How many total cases of COVID-19 worldwide will be reported or estimated as of 31 March 2021? What will be the U.S. civilian unemployment rate for June 2021? What will be the end-of-day closing value of the South Korean won (₩) against the U.S. dollar on 15 July 2020 [1]? Important parties such as corporations, banks and intelligence agencies want answers to questions like these now, and they will clearly not ask just anybody. They will turn to experts. But how good are experts at foreseeing the future?

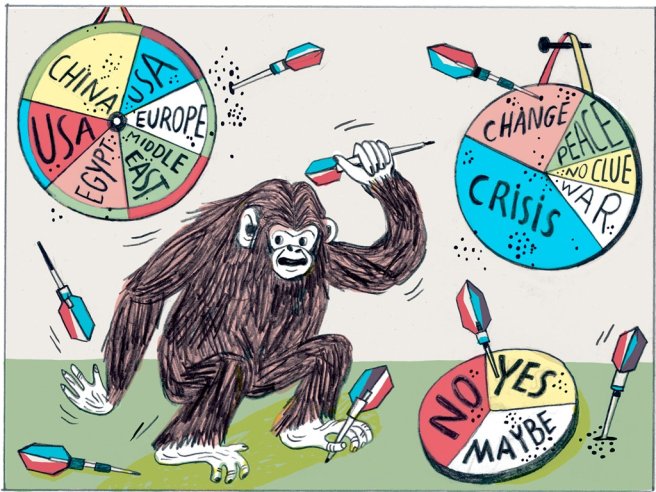

Not very good. In 2005, Philip Tetlock, a political psychologist and now a Wharton professor at the University of Pennsylvania, published the findings of a research program he had started in the 1980s, whose main goal was to give a clear assessment of how reliable expert judgments are in forecasting. He gathered 284 experts, all highly educated and involved, from different professional angles, in the political and economic trends of the day, and asked them to assign probabilities to possible outcomes related to current affairs; he amassed 82,361 forecasts and, over the years, compared them with actual outcomes to assess their accuracy. The best-known conclusion of the study, still widely quoted in the press, was that “chimps randomly throwing darts at the possible outcomes would have done almost as well as the experts” [2]. That is, the average expert in the study proved no more accurate than a random-guessing algorithm [3].

But this is not the whole story; in fact, it misses much of the insight the research delivered. First, the pundits did better than the “mindless algorithm” on short-range questions, which asked them to foresee events at most a year ahead, and their accuracy dropped as the questions became longer-range. Moreover, and most importantly, not all forecasters performed alike: some did better than others and actually beat the chimp, albeit by a small margin. As Tetlock himself put it, “however modest their foresight was, they had some” (2015, 68). If some forecasters were consistently better than others, then whatever skills they possessed could and should be harnessed to produce better forecasts overall. One of the main goals of Tetlock’s latest research program was to do just that.

Bad Predictions

The benchmark for what counts as great work among intelligence agencies is good prediction. Data collection is only part of the job; analysts are called on to make judgments based on the data, and their managers expect reliable forecasts in light of which courses of action are planned. Tetlock (2015, 81-85) tells the story of how the U.S. decided to invade Iraq in 2003 on the strength of the intelligence community’s prediction that Saddam Hussein’s regime possessed weapons of mass destruction. We now know that the prediction was wrong and the whole operation a fiasco, but Tetlock asks: was it a bad prediction? Did one of the most internationally respected intelligence communities, with a budget estimated at around 50 billion dollars, simply get its numbers wrong? It is a fair question, and the answer is: in a sense, yes. Leaving aside suspicions that the White House may have hijacked its intelligence, Tetlock cites Robert Jervis, Professor at Columbia University and author of Why Intelligence Fails (2010), and endorses his conclusion: the prediction was both wrong and reasonable. It was wrong because the outcome contradicted it, and it was reasonable because, he argues, a lot of information pointed in the predicted direction.

Still, there was something very bad about it: the confidence with which the prediction was made [4]. The evidence at hand was important but not decisive, and the prediction should not have treated it as conclusive. As Tetlock puts it (2015, 84), “If some in Congress had set the bar at ‘beyond a reasonable doubt’ for supporting the invasion, then a 60% or 70% estimate that Saddam was churning out weapons of mass destruction would not have satisfied them.” The invasion might have been avoided.

This example is telling because it points to a crucial insight into what makes a good prediction: how fine-grained it is. Changing the probability assigned to an event by even a few hundredths makes a real difference, especially in a game of accuracy. The key tool for assessing accuracy is the Brier score. Originally proposed to quantify the accuracy of weather forecasts, the Brier score is a function that measures how far a prediction was from the actual outcome [5]. In its original formulation, a forecast scores between 0 and 2.0, where 0 means perfect correspondence with reality and 2.0 means the forecast was as far from reality as it can get; the lower the score, the better. It helps to think of it in betting terms: betting high on an event occurring (assigning it a high probability) when it does not happen makes you lose a lot (you get a high Brier score). Conversely, betting high on an event not occurring (assigning it a low probability) when it indeed does not happen makes you win a lot (you get a low Brier score). Forecasters thus get a Brier score for every prediction they make, and their accuracy is determined by the average of all their scores [6].
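The original two-category Brier score for a yes/no question can be sketched in a few lines of Python. This is a minimal illustration only, not the scoring code used by GJP or Good Judgment Open (whose exact procedure is described in [6]); the function names `brier_score` and `mean_brier` are hypothetical.

```python
def brier_score(p_yes: float, happened: bool) -> float:
    """Original two-category Brier score for a single yes/no forecast.

    The forecast assigns p_yes to "yes" and 1 - p_yes to "no"; the score is the
    sum of squared differences between forecast and outcome over both categories,
    so it ranges from 0 (perfect) to 2 (maximally wrong).
    """
    if not 0.0 <= p_yes <= 1.0:
        raise ValueError("p_yes must be a probability in [0, 1]")
    outcome_yes = 1.0 if happened else 0.0
    return (p_yes - outcome_yes) ** 2 + ((1.0 - p_yes) - (1.0 - outcome_yes)) ** 2


def mean_brier(forecasts: list[tuple[float, bool]]) -> float:
    """Average Brier score over many (probability, outcome) pairs; lower is better."""
    if not forecasts:
        raise ValueError("need at least one forecast")
    return sum(brier_score(p, happened) for p, happened in forecasts) / len(forecasts)


# Betting high on an event that does not happen is costly...
print(brier_score(0.9, False))  # ≈ 1.62
# ...while betting low on an event that does not happen is cheap.
print(brier_score(0.1, False))  # ≈ 0.02
```

Some libraries report a one-category variant, (p - outcome)², which runs from 0 to 1 and is exactly half of the score above for binary questions.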

Illustration Katia Fouquet. Source: [2].

Improving Intelligence Through Tournaments

What Brier score would the U.S. intelligence community get on the question of weapons of mass destruction in Iraq? A high one; that is, it would score badly. Its prediction was overconfident, judging by the language it used (see [4]), and the Brier score is there to penalize such overconfidence when outcomes contradict it. Doing well on the Brier scale, Tetlock emphasizes throughout the book, is a matter of decimals: constantly adjusting the probability you assign in light of the evidence you come across is a key aspect of good forecasting. Superforecasters who start with a 20% probability for some event, for example, will be ready to add or subtract 5% if news on the matter points in that direction.
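To see how the Brier score punishes overconfidence, the sketch below scores three confidence levels for a yes/no question whose answer turned out to be "no". The 60% and 70% figures come from Tetlock's counterfactual quoted earlier; the 95% figure is purely hypothetical, a stand-in for near-certain language, and is not a number taken from the intelligence report.

```python
def brier_score(p_yes: float, happened: bool) -> float:
    """Two-category Brier score (0 = perfect, 2 = maximally wrong)."""
    outcome_yes = 1.0 if happened else 0.0
    return (p_yes - outcome_yes) ** 2 + ((1.0 - p_yes) - (1.0 - outcome_yes)) ** 2


# Hypothetical confidence levels for a forecast whose outcome was "did not happen".
# 0.95 is an illustrative stand-in for near-certainty, NOT a figure from the report.
for p in (0.95, 0.70, 0.60):
    print(f"forecast {p:.2f} -> Brier {brier_score(p, happened=False):.3f}")
```

Because the penalty is squared, each extra step of unwarranted confidence costs more than the previous one: moving from 0.60 to 0.70 adds 0.26 to the score, while moving from 0.70 to 0.95 adds about 0.83.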

But what exactly was the U.S. intelligence community’s Brier score on that question, and what is its Brier score more generally? How accurate is it? Simply put, no one knows, because no one has measured its accuracy. Measuring accuracy is not simply a matter of saying that there is a 70% chance of rain tomorrow and then waiting to see whether it rains (which is why a device like the Brier score was designed in the first place); as Tetlock points out (2015, 57), judging a forecast that way is a fallacy. To check whether the forecast was accurate, we would need to re-run the day, perhaps a hundred times, and see whether it rains in about 70 of those 100 re-runs; but we can’t do that. Still, many believe that if an event occurs and someone said it had a “fair chance” of happening, that tells us enough about how accurate the forecaster is. This kind of approach to forecasting is what kept real measurement from happening, Tetlock warns.
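Since a day cannot be re-run, a standard practical substitute in forecast verification (not spelled out in this paragraph) is to pool many different occasions on which a forecaster said, say, 70%, and check how often the event actually occurred. This is a check of calibration. The sketch below groups an invented record of (probability, outcome) pairs by stated probability; the function name `calibration_table` and the data are hypothetical.

```python
from collections import defaultdict


def calibration_table(forecasts: list[tuple[float, bool]]) -> dict[float, float]:
    """Map each stated probability (rounded to one decimal) to the observed
    frequency with which the event actually happened."""
    buckets: dict[float, list[int]] = defaultdict(list)
    for p, happened in forecasts:
        buckets[round(p, 1)].append(1 if happened else 0)
    return {p: sum(hits) / len(hits) for p, hits in sorted(buckets.items())}


# Invented record: ten days forecast at 70% (rain on seven of them)
# and ten days forecast at 30% (rain on three of them).
record = [(0.7, True)] * 7 + [(0.7, False)] * 3 + [(0.3, True)] * 3 + [(0.3, False)] * 7
print(calibration_table(record))  # {0.3: 0.3, 0.7: 0.7}
```

A well-calibrated forecaster's observed frequencies match the stated probabilities; a real assessment would need far more than ten occasions per bucket before the match (or mismatch) meant much.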

The Intelligence Advanced Research Projects Activity (IARPA) was bound to change that. Founded in 2006, IARPA is a U.S. government agency set up to “invest in high-risk/high-payoff research that has the potential to provide our [U.S.] nation with an overwhelming intelligence advantage” [7]. Improving the quality of intelligence required improving both the analysis of data and the forecasts based on it, which in turn called for serious accuracy metrics and the systematic assessment of forecasts. More importantly, IARPA wanted to know, in concrete terms, how the overall accuracy of agents’ forecasts could be improved. The way it decided to look for answers is particularly interesting: it organized a tournament that teams of researchers could join to offer their best shot at the problem. MIT showed up. The University of Michigan showed up. Philip Tetlock, probably the best prepared for the task given his earlier major research project, showed up along with his partners Barbara Mellers (UPenn) and Don Moore (UC Berkeley), and together they assembled the team and research program known as the Good Judgment Project (GJP).

Good Judgment Project

The first tournament took place in 2011. The questions IARPA posed to its participants were mostly short-range, with outcomes expected within a year, perhaps drawing on the lessons of Tetlock’s earlier research on expert political judgment and its limited foresight; and they were geopolitical in character, “such as whether certain countries will leave the euro, whether North Korea will reenter arms talks, or whether Vladimir Putin and Dmitri Medvedev would switch jobs” [8]. All forecasters were allowed to update their predictions as time passed and the deadline approached, but every adjustment counted as a separate forecast, and every forecast went into the forecaster’s overall Brier score. Keeping a low Brier score was in everyone’s interest.

The forecasts the teams produced were compared with those of a control group IARPA put together: a random team of forecasters with no special training. The research teams could do anything they considered necessary to make their predictions as accurate as possible. Their objective: beat the control group’s “wisdom as a crowd” by 20%. How did GJP do? It beat the control group by 60% and the four other teams by 40%. The following year, IARPA organized another tournament, and GJP won again, beating the control group by 78%. In fact, GJP was doing so well by the second year that IARPA decided to drop the other academic teams in subsequent years (2015, 18).

“The tournament was not, however, just a horse race”, as Tetlock et al. (2014) put it. Every research program ran its own experiments and measurements and compared different groups of forecasters. In its first year, GJP worked with 3,200 volunteers and randomly assigned them to groups in which different hypotheses about the psychological drivers of accuracy were tested. Among its findings, perhaps unsurprisingly, were that groups who received training in cognitive debiasing did better overall and that teamwork generally boosted accuracy [9]. But GJP’s main finding was the superforecasters: the top 2% of participants, who proved the most accurate; each year they formed elite teams, and they never regressed. Before discussing what makes them so super, I will dwell a little longer on the methods GJP used to arrive at its predictions.

Humans and Algorithms

I mentioned that one of GJP’s central findings in the first year of the tournament was that teamwork boosted accuracy. Tetlock gives the figure: “on average, teams were 23% more accurate than individuals” (2015, 201). Note that in the first year no superforecaster teams had yet been put together. Superforecasters are called “super” for an obvious reason, but when they were assigned to elite teams the following year, their accuracy rose by 50%: they became much better than they had been, and they were pretty good already (2015, 205). The lesson of the first year was thus reinforced:

- teamwork indeed boosts accuracy.

Let us unpack this, because it actually points in two different directions. First, team predictions were more accurate than individual predictions. What does that mean? Simply that the average prediction of a team, the wisdom of the crowd, was better than individual predictions. In fact, GJP’s winning strategy in the tournament relied on the wisdom of the crowd (2015, 90). Distilling the wisdom of the crowd only requires calculating the simple average of the predictions, but GJP did slightly more than that: it calculated a weighted average. Some forecasters proved more diligent and accurate as time passed, so the team’s prediction was tilted toward their views.
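The difference between a simple and a weighted average can be sketched as follows. The weights here are hypothetical (think of them as reflecting each forecaster's track record); the text does not say exactly how GJP computed its weights, so this is an illustration of the idea rather than GJP's formula.

```python
def simple_average(probs: list[float]) -> float:
    """Unweighted mean of the team's probability forecasts."""
    if not probs:
        raise ValueError("need at least one forecast")
    return sum(probs) / len(probs)


def weighted_average(probs: list[float], weights: list[float]) -> float:
    """Mean in which each forecast counts in proportion to its weight."""
    if len(probs) != len(weights) or not probs:
        raise ValueError("need one weight per forecast")
    total = sum(weights)
    if total <= 0:
        raise ValueError("weights must sum to a positive number")
    return sum(p * w for p, w in zip(probs, weights)) / total


team = [0.6, 0.7, 0.9]
track_record = [1.0, 1.0, 2.0]  # hypothetical: the third forecaster has proven more accurate
print(round(simple_average(team), 3))                  # 0.733
print(round(weighted_average(team, track_record), 3))  # 0.775
```

With equal weights the two functions agree; giving the proven forecaster double weight tilts the team's estimate toward that forecaster's 0.9.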

Still, why should we trust the crowd? Debate on this has been rife in recent years, fueled by interesting results in the social choice literature, mainly surrounding the Jury Theorems and the Diversity Trumps Ability Theorem, but this is not the place to review that literature [10]. Nonetheless, it is worth stating one point crucial to understanding why teams can outperform individuals by significant margins: judgment aggregation can benefit from diverse points of view on the matter at hand. No forecaster has all the information and evidence needed to achieve certainty on any given question. Information is dispersed asymmetrically, and it can often happen that something one forecaster knows, which boosts his confidence in some outcome, another forecaster does not know, and vice versa. The average of the forecasts, be it simple or weighted, pools that information into a collective judgment, which often turns out to be highly accurate. Of course, many dangers threaten crowds, and Tetlock devotes Chapter 9 to discussing how important it is to avoid them. Most important is that forecasters remain independent and do not succumb to the influence of others, so that they can still judge according to their own information and evidence.
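The simplest of the Jury Theorems, Condorcet's, makes the point about independence concrete. In its classic setup (a simplification, not GJP's method), each of n independent voters picks the right answer to a yes/no question with probability p > 0.5, and the group follows the majority. The probability that the majority is right then follows from the binomial distribution; the sketch assumes an odd n to avoid ties, and the function name is hypothetical.

```python
from math import comb


def majority_correct(n: int, p: float) -> float:
    """Probability that a strict majority of n independent voters, each right
    with probability p, reaches the correct answer (n must be odd)."""
    if n < 1 or n % 2 == 0:
        raise ValueError("n must be a positive odd number")
    needed = n // 2 + 1
    return sum(comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(needed, n + 1))


for n in (1, 3, 11, 101):
    print(n, round(majority_correct(n, 0.6), 3))
```

Each voter is only modestly better than a coin toss, yet the majority's reliability climbs with n. The calculation leans entirely on independence: if everyone simply copied one person, the group would be exactly as reliable as that person, which is why Tetlock stresses keeping forecasters independent.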

This brings us to the next important step in GJP’s winning strategy. Teamwork and information sharing were important, so interaction was an essential part of all that accuracy (even though team members did not meet face-to-face, interacting mainly through online discussion forums). Still, one particular drawback of sharing information should be noted: it harms diversity, since shared and internalized information can lead crowds to think uniformly. Luckily, big crowds (as a reminder, GJP had 3,200 active forecasters in its first year, and not all of them were assigned to teams) are not very good at internalizing all kinds of shared information. Seen in this light, a lot of information gets ignored, no matter what. But there is a particularly interesting way to account for some of that ignored information, and that is where the extremizing algorithm becomes, for Tetlock and his team, highly useful.

Even though judgment aggregation does most of the magic, one psychological shortcoming of forecasters stands in the way of high accuracy: underconfidence. Consider a forecaster who has reasonable evidence in favour of the occurrence of some event and assigns it a probability of 0.8. But, as he gives it more thought, his confidence recedes and he decides it would be more cautious to back off to 0.7, or even lower, because he might be missing relevant information. This kind of reasoning is not unusual, and the accuracy of the average prediction suffers from it. But someone who knew that the resulting average was handicapped in this way and, moreover, knew that each forecaster had come up with a prediction on his own, would be in a position to infer that the average prediction should be made more extreme: if the result was 0.7, it should become 0.8 or 0.9; if the result was 0.4, it should become 0.3 or 0.2 (1 being certainty that the event will happen, 0 certainty that it won’t). The extremizing algorithm does just that, and GJP used it to augment the crowd’s wisdom in the tournament [11].
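One common way to extremize, assumed here for illustration (the exact transformation and parameter GJP used are not given in this text; see [11] for the variants its researchers studied), raises the probability and its complement to a power a > 1 and renormalizes. This is equivalent to multiplying the log-odds by a. The value a = 2 below is an arbitrary choice.

```python
def extremize(p: float, a: float = 2.0) -> float:
    """Push an aggregated probability away from 0.5.

    Equivalent to multiplying the log-odds of p by a: a > 1 extremizes,
    a = 1 leaves p unchanged. The default a = 2.0 is an arbitrary choice.
    """
    if not 0.0 <= p <= 1.0:
        raise ValueError("p must be a probability in [0, 1]")
    if a <= 0:
        raise ValueError("a must be positive")
    return p**a / (p**a + (1.0 - p) ** a)


print(round(extremize(0.7), 3))  # 0.845
print(round(extremize(0.4), 3))  # 0.308
```

As in the example above, an average of 0.7 moves up to roughly 0.85 and an average of 0.4 moves down to roughly 0.31, while 0.5 stays exactly where it is: extremizing sharpens whatever direction the crowd already leans, but cannot create one.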

A special condition mentioned above for applying the extremizing algorithm is that the predictions come from independent forecasters. This point again builds on the epistemic diversity of the participants. Every participant has some evidence that tips the 50/50 balance to one side and judges accordingly, unless his forecasting behaviour is meant to emulate a coin toss. But, as I already emphasized, those bits of information do not get shared efficiently across big crowds, and every sensible participant knows that whatever information he has is not enough; this is what makes him back off in his confidence. Nevertheless, the average distilled from a big crowd points in favour of some outcome. In other words, all those different sources of information, all those tidbits, point to the most probable outcome. Extremizing that average is a way of simulating what would have happened if everyone knew what everyone else knew: it would have boosted everyone’s confidence in their own judgment. But the extremizing algorithm does not do much for superforecaster teams, because they are particularly good at sharing information (2015, 210). Efficient teams don’t profit from such simulations, since they already have ways of pooling all sorts of relevant information. This is the second direction I mentioned at the beginning of this section: not only are teams more accurate than individuals, but teamwork can actually improve the accuracy of seriously engaged participants.
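The "if everyone knew what everyone else knew" idea can be made concrete with Bayes' rule, under strong simplifying assumptions that are this sketch's, not the book's: all forecasters start from the same 50/50 prior, and each holds one separate, independent piece of evidence. Each forecaster's odds divided by the prior odds then give the likelihood ratio of their evidence, and independent likelihood ratios multiply. The function name is hypothetical.

```python
def pooled_probability(individual_probs: list[float], prior: float = 0.5) -> float:
    """Combine forecasts that each reflect a shared prior plus one independent
    piece of evidence, by multiplying the evidence's likelihood ratios."""
    if not 0.0 < prior < 1.0:
        raise ValueError("prior must lie strictly between 0 and 1")
    prior_odds = prior / (1.0 - prior)
    odds = prior_odds
    for p in individual_probs:
        if not 0.0 < p < 1.0:
            raise ValueError("forecasts must lie strictly between 0 and 1")
        odds *= (p / (1.0 - p)) / prior_odds  # likelihood ratio of this forecaster's evidence
    return odds / (1.0 + odds)


forecasts = [0.7, 0.7, 0.7]
print(sum(forecasts) / len(forecasts))          # simple average, about 0.7
print(round(pooled_probability(forecasts), 3))  # 0.927
```

Three forecasters who each say 0.7 average to 0.7, but if their reasons are genuinely independent, pooling them justifies about 0.93. In log-odds terms, pooling three such forecasts triples the log-odds, which is one way to see why pushing the average away from 0.5 can be warranted when forecasters work independently, and why it adds little once a team already shares its information.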

What Makes Them So Super?

The bulk of Tetlock’s book deals with this very question, so I am not going to spoil it. He discusses whether their success stems from their intelligence and encompassing knowledge of the world (Chapter 5), from a mathematical background (Chapter 6), or simply from their being avid news readers (Chapter 7). The source of their accuracy lies somewhere among these alternatives, although this doesn’t mean that all superforecasters share the same characteristics. They have different backgrounds; most have a college degree, but not all have mathematical training, nor can it be said that they have encyclopedic knowledge. Still, whenever they encounter a question outside their expertise and almost alien to their world (concerning elections in Guinea, for example), they approach it with curiosity and an appetite for learning. A good prediction on the matter will require them to keep up with the news on the subject, of course, even though no superforecaster spends a lot of time doing so. They are not getting paid for their predictions, after all, and searching the internet for information can be time-consuming [12].

Overall, what can be said for sure about them is that they are not the kind to run away from criticism. They expose their reasoning to others in order to gain access to different perspectives, and they know how to admit their mistakes and correct them. In short, they are flexible thinkers. But, as I said, I don’t intend to go into the details of what makes them so effective; the book itself offers a lot of psychological insight on the matter.

By now a question must be lurking in the air: if they are so super, did they predict the COVID-19 pandemic? Well, I don’t know. Using an expression in vogue right now, we might say that a pandemic is a black swan, meaning that pandemics are so improbable that they are close to impossible to foresee. Or, on the contrary, as the very author who coined the expression recently argued [13], pandemics are actually white swans: given our networked world, such catastrophic events were far more probable than we would like to think. I don’t know what the superforecasters thought about the imminence of a pandemic before the crisis began, but anyone can follow their predictions on the recovery from the crisis [14].

References

- [1] These are actual questions drawn from the Good Judgment Project website (www.goodjudgment.com), on which superforecasters are currently asked to provide predictions.

- [2] More here: https://www.nytimes.com/2012/06/24/opinion/sunday/political-scientists-are-lousy-forecasters.html.

- [3] The whole research project, its methods and its results are described in Tetlock, Philip E. Expert Political Judgment: How Good Is It? How Can We Know? Princeton, NJ: Princeton University Press, 2005.

- [4] This is the excerpt Tetlock cites (2015, 81) from the intelligence community report to the White House (National Intelligence Estimate), released to the public in October 2002: “We judge that Iraq has continued its weapons of mass destruction programs in defiance of UN resolutions and restrictions. Baghdad has chemical and biological weapons as well as missiles with ranges in excess of UN restrictions; if left unchecked, it probably will have a nuclear weapon during this decade.”

- [5] https://statisticaloddsandends.wordpress.com/2019/12/29/what-is-a-brier-score/.

- [6] The exact process through which accuracy is determined, at least as described for Good Judgment Open (a spin-off of the original project that anyone can join to take part in the forecasting challenges), can be found at https://www.gjopen.com/faq.

- [7] https://www.iarpa.gov/.

- [8] https://www.nytimes.com/2013/03/22/opinion/brooks-forecasting-fox.html.

- [9] Tetlock, Philip E., Barbara A. Mellers, Nick Rohrbaugh, and Eva Chen. “Forecasting Tournaments: Tools for Increasing Transparency and Improving the Quality of Debate.” Current Directions in Psychological Science 23, no. 4 (August 2014): 290–95. Findings of other teams are cited here as well.

- [10] The following entries should provide enough material and further reading for anyone interested in the subject: Surowiecki, James. The Wisdom of Crowds: Why the Many Are Smarter than the Few and How Collective Wisdom Shapes Business, Economies, Societies, and Nations. 1st ed. New York: Doubleday, 2004; Page, Scott E. The Diversity Bonus: How Great Teams Pay Off in the Knowledge Economy. Princeton University Press, 2017; Dietrich, Franz, and Kai Spiekermann. “Jury Theorems.” In The Routledge Handbook of Social Epistemology, edited by M. Fricker. New York and Abingdon, 2020.

- [11] Baron, Jonathan, Barbara A. Mellers, Philip E. Tetlock, Eric Stone, and Lyle H. Ungar. “Two Reasons to Make Aggregated Probability Forecasts More Extreme.” Decision Analysis 11, no. 2 (March 19, 2014): 133–45.

- [12] Several superforecasters are interviewed in this video about their approach to forecasting, and they share interesting advice: https://www.youtube.com/watch?v=6POQjSjIXWk.

- [13] https://www.newyorker.com/news/daily-comment/the-pandemic-isnt-a-black-swan-but-a-portent-of-a-more-fragile-global-system.

- [14] https://goodjudgment.com/covidrecovery/.